Imagine receiving a video call from your company’s CEO asking for an urgent financial transfer. The voice sounds real. The face looks authentic. Everything appears legitimate—until you realize later it was never the CEO at all.

This is the dangerous reality of deepfake attacks in cybercrime.

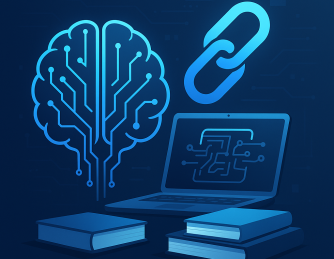

Deepfake technology uses artificial intelligence to generate highly realistic fake audio, video, and images. While the technology has legitimate uses in entertainment and media, cybercriminals are increasingly exploiting it for fraud, identity theft, misinformation, and social engineering attacks.

Recent cybersecurity reports show that deepfake-related fraud attempts are rising dramatically worldwide, targeting businesses, financial institutions, and individuals.

In this guide, we’ll explore how deepfake attacks work, why they are becoming a serious cybersecurity threat, and how organizations can protect themselves from this emerging form of digital deception.

What Are Deepfakes?

Deepfakes are AI-generated synthetic media created using deep learning models. These models analyze large amounts of images, videos, and audio recordings to mimic a person’s voice, facial expressions, and mannerisms.

The term “deepfake” comes from:

- Deep learning
- Fake media

How Deepfakes Work

Deepfake technology typically uses machine learning techniques such as:

- Generative Adversarial Networks (GANs)
- Neural networks
- Voice synthesis models
- Facial mapping algorithms

These technologies allow AI systems to produce hyper-realistic content that appears genuine.
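
Of the techniques listed, GANs have a compact standard formulation: a generator G turns random noise z into synthetic samples, while a discriminator D tries to tell them apart from real samples x. As a sketch, using notation common in the GAN literature rather than anything defined in this article, the two networks play the following minimax game:

$$\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]$$

Training pushes the generator toward output the discriminator can no longer distinguish from real data, which is one reason the resulting media can look authentic.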

Why Deepfake Attacks Are Increasing

Deepfake technology has become more accessible due to the availability of AI tools and open-source software.

Several factors contribute to the rise of deepfake cybercrime.

Easy Access to AI Tools

Today, cybercriminals can access powerful AI tools that generate deepfake content with minimal technical expertise.

Abundant Public Data

Social media platforms provide attackers with ample images, videos, and voice recordings needed to train deepfake models.

Increased Digital Communication

Businesses rely heavily on:

- Video meetings
- Voice calls
- Remote collaboration tools

This creates opportunities for attackers to impersonate trusted individuals.

Types of Deepfake Attacks in Cybercrime

Deepfake attacks come in various forms, each targeting different vulnerabilities within organizations and individuals.

1. Deepfake CEO Fraud

One of the most dangerous deepfake scams involves impersonating company executives.

How It Works

Attackers create realistic audio or video impersonations of CEOs or senior executives and instruct employees to:

- Transfer money
- Share confidential data
- Approve fraudulent transactions

This attack combines deepfake technology with business email compromise (BEC) tactics.

2. Identity Theft Using Deepfakes

Deepfakes can be used to bypass identity verification systems.

Examples

Attackers may generate fake videos or voice recordings to:

- Pass biometric authentication
- Access banking accounts
- Manipulate identity verification systems

This is particularly concerning for systems using facial recognition or voice authentication.

3. Disinformation Campaigns

Deepfakes can be used to create fake videos of public figures saying or doing things they never did.

This can lead to:

- Political misinformation
- Corporate reputation damage
- Public panic

In corporate environments, such attacks can damage brand trust and market confidence.

4. Social Engineering Attacks

Cybercriminals often combine deepfakes with traditional phishing attacks.

Example Scenario

An employee receives a video message from someone appearing to be their manager requesting sensitive information.

Believing the message is legitimate, the employee unknowingly provides confidential data.

5. Financial Fraud and Investment Scams

Deepfake videos have been used to impersonate financial experts or executives promoting fraudulent investments.

Victims are convinced by realistic videos and voice recordings.

This tactic is becoming increasingly common in cryptocurrency scams and online investment fraud.

The Impact of Deepfake Cyber Attacks

Deepfake attacks can cause severe financial and reputational damage.

Financial Loss

Organizations may lose millions through fraudulent transactions or scams.

Data Breaches

Attackers may gain access to confidential information through manipulated authentication systems.

Reputation Damage

Deepfake videos targeting executives or companies can spread quickly across social media platforms.

Loss of Customer Trust

When customers see fake content involving a company, it can erode trust in the brand.

Warning Signs of Deepfake Attacks

Detecting deepfakes can be difficult, but certain indicators may reveal manipulated content.

Unnatural Facial Movements

Deepfake videos sometimes show subtle inconsistencies in:

- Facial expressions
- Eye movements
- Lip synchronization

Voice Irregularities

AI-generated voices may sound realistic but sometimes lack natural speech patterns.

Unusual Requests

If someone unexpectedly requests sensitive information or an urgent financial transfer, verify the request through a separate, trusted channel before acting on it.

Suspicious Context

Always question unexpected video messages or calls involving confidential actions.
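
The non-visual warning signs above (unexpected contact, urgency, and sensitive requests) can be turned into a simple triage rule. The following is a minimal sketch, not a detection product: the keyword lists, the function names `red_flags` and `needs_verification`, and the "sensitive request plus one other flag" threshold are all illustrative assumptions.

```python
from typing import List

# Hypothetical keyword lists; a real deployment would tune these to its own context.
URGENCY_TERMS = ("urgent", "immediately", "right now", "asap")
SENSITIVE_TERMS = ("transfer", "wire", "payment", "password", "credentials", "confidential")


def red_flags(message: str, expected: bool) -> List[str]:
    """Return the warning signs found in a message or call transcript."""
    text = message.lower()
    flags = []
    if not expected:
        flags.append("unexpected contact")
    if any(term in text for term in URGENCY_TERMS):
        flags.append("urgency pressure")
    if any(term in text for term in SENSITIVE_TERMS):
        flags.append("sensitive action requested")
    return flags


def needs_verification(message: str, expected: bool) -> bool:
    """Assumed rule: a sensitive request plus at least one other flag needs a check."""
    flags = red_flags(message, expected)
    return "sensitive action requested" in flags and len(flags) >= 2


if __name__ == "__main__":
    print(red_flags("Please wire the payment immediately", expected=False))
```

A rule like this does not judge whether a video or voice is synthetic. It only flags the request pattern that deepfake scams tend to rely on, so it works even when the media itself is convincing.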

How Organizations Can Prevent Deepfake Attacks

Preventing deepfake cybercrime requires a combination of technology, awareness, and security policies.

1. Implement Multi-Factor Authentication (MFA)

MFA adds an additional layer of protection.

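Even if attackers convincingly impersonate someone with a deepfake, they still need a second factor they do not hold, which makes unauthorized access much harder. Because deepfakes can target facial and voice recognition, biometric checks are best combined with another factor rather than used on their own.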

MFA Examples

- Authentication apps
- Hardware tokens
- SMS verification
- Biometric verification
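
To illustrate why a second factor resists impersonation, here is a minimal sketch of the time-based one-time password (TOTP) scheme that authentication apps commonly use, following RFC 4226 and RFC 6238 with only the Python standard library. The function names and the one-step drift window are illustrative choices; production systems should rely on a maintained library and secure secret storage.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits of the last byte
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, now: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time-step counter."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str, now: Optional[float] = None, step: int = 30) -> bool:
    """Accept the code for the current step or an adjacent one (clock drift)."""
    if now is None:
        now = time.time()
    return any(
        hmac.compare_digest(totp(secret, now + drift * step, step), submitted)
        for drift in (-1, 0, 1)
    )


if __name__ == "__main__":
    shared_secret = b"12345678901234567890"  # RFC test secret; never use in production
    print(totp(shared_secret))
```

The code depends on a secret shared between the server and the user's device, so a cloned face or voice alone is not enough to produce it.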

2. Establish Verification Protocols

Organizations should create verification procedures for sensitive requests.

Best Practice

Require secondary confirmation before:

- Financial transfers
- Data access
- System changes

Employees should confirm requests through a separate communication channel.
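
The secondary-confirmation rule can be expressed as a small policy check. This is a sketch under stated assumptions: the `Request` class, the action names, and the channel labels such as `"phone_callback"` are hypothetical and not part of any specific product.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical labels for the sensitive actions listed above.
SENSITIVE_ACTIONS = {"financial_transfer", "data_access", "system_change"}


@dataclass
class Request:
    requester: str
    action: str
    received_via: str  # channel the request arrived on, e.g. "video_call"
    confirmations: Set[str] = field(default_factory=set)

    def confirm(self, channel: str) -> None:
        """Record that the requester confirmed the request on this channel."""
        self.confirmations.add(channel)


def may_proceed(request: Request) -> bool:
    """Sensitive actions need a confirmation on a channel other than the original."""
    if request.action not in SENSITIVE_ACTIONS:
        return True
    return any(channel != request.received_via for channel in request.confirmations)


if __name__ == "__main__":
    req = Request("ceo", "financial_transfer", received_via="video_call")
    print(may_proceed(req))       # blocked: no independent confirmation yet
    req.confirm("phone_callback")  # call back on a number already on file
    print(may_proceed(req))
```

The key design choice is that a confirmation on the originating channel does not count, since an attacker who controls a fake video call can also "confirm" within that call.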

3. Train Employees to Recognize Deepfake Threats

Security awareness training helps employees identify suspicious communications.

Training should include:

- Deepfake attack examples
- Social engineering awareness
- Reporting procedures

Human vigilance remains one of the strongest defenses.

4. Use AI-Based Deepfake Detection Tools

Cybersecurity technologies are evolving to detect synthetic media.

These tools analyze content for:

- Facial inconsistencies
- Voice manipulation
- AI-generated artifacts

Organizations can deploy such tools to monitor suspicious media.

5. Strengthen Cybersecurity Infrastructure

A strong cybersecurity strategy helps prevent deepfake attacks from escalating.

Security teams should implement:

- Endpoint protection
- Network monitoring
- Access controls
- Threat intelligence platforms

Modern cybersecurity solutions help identify and stop suspicious activities early.

The Future of Deepfake Cybercrime

As artificial intelligence continues to evolve, deepfake attacks will become more realistic and more difficult to detect.

Cybersecurity experts predict:

- Increased use of deepfake audio scams
- Sophisticated AI-driven impersonation attacks
- Automated disinformation campaigns

Organizations must stay ahead of these threats by investing in advanced cybersecurity defenses and employee awareness programs.

Frequently Asked Questions (FAQ)

What are deepfake attacks in cybercrime?

Deepfake attacks use AI-generated videos, images, or voice recordings to impersonate individuals and deceive victims for financial fraud, identity theft, or misinformation.

Why are deepfakes dangerous for businesses?

Deepfakes can enable attackers to impersonate executives, bypass identity verification systems, and manipulate employees into revealing sensitive information.

How can companies detect deepfake attacks?

Organizations can detect deepfakes using AI detection tools, employee awareness training, and verification protocols for sensitive communications.

Are deepfake attacks common?

Yes. Deepfake attacks are increasing as AI tools become more accessible, and cybercriminals continue to exploit them for fraud and social engineering.

How can individuals protect themselves from deepfake scams?

Individuals should verify suspicious requests, avoid sharing sensitive data online, enable multi-factor authentication, and stay informed about emerging cyber threats.

Protect Your Organization from Advanced Cyber Threats

Deepfake attacks represent one of the fastest-growing threats in modern cybercrime. As artificial intelligence evolves, organizations must adopt stronger cybersecurity strategies to defend against these sophisticated attacks.

Advanced security platforms can help detect suspicious activity, prevent unauthorized access, and protect critical systems from emerging threats.

👉 Request a demo today:

https://www.xcitium.com/request-demo/

Discover how modern cybersecurity solutions can help your organization stay ahead of evolving cyber threats and safeguard your digital infrastructure.
